Guest Article by Sarenne Wallbridge

The application of artificial intelligence to bio-signal analysis is one of the hottest topics in medical and biological research at the moment. People already benefit from this approach through its application to MRI scans and ECGs, but the potential for further benefits is enormous. We have only seen the tip of the iceberg…

Vivent and its collaborators are at the forefront of applying this technology to plants, and we are often asked about our approach. In this short article, recent Informatics graduate Sarenne Wallbridge explains some key concepts of artificial intelligence. We will follow up with more details on machine learning in future blog posts, but feel free to contact us if you’d like to work together.

Disclaimer: In recent years, Artificial Intelligence (AI) and Machine Learning have exploded in popularity in almost every field of data processing, and for good reason: they are highly effective across a wide range of applications. Their success has propelled AI from niche academic beginnings to a buzzword that crops up in tech articles and on LinkedIn profiles. There are many ways to define these concepts; this brief article offers a high-level overview of AI and Machine Learning.

AI can often seem like a futuristic concept, but the field has existed for well over 50 years. Though its exact inception is debated, many credit the birth of AI to the Godfather of Computer Science, Alan Turing, and his 1950 paper ‘Computing Machinery and Intelligence’, in which he asked the pivotal question ‘Can machines think?’. To answer it, he proposed what is now known as the Turing test, in which an interrogator must tell a human and a machine apart using only conversation.

AI has since evolved to encompass many more fields, from computer vision and pattern recognition to natural language processing and robotics. Given the breadth of the field’s applications, definitions of AI are murky at best. One of the most popular comes from another co-founder of AI, John McCarthy: ‘the science and engineering of making intelligent machines, especially intelligent computer programs’. Even this leaves plenty of room for interpretation.

Rather than focusing on definitions, let’s consider something more concrete, namely implementation. Broadly, AI approaches fall into two camps: symbolic and connectionist.

The symbolic approach dominated AI from its inception until the 1980s. It rests on the assumption that intelligence can be achieved by manipulating symbols according to hard-coded rules. This allowed important aspects of human knowledge to be encoded directly, but only in restricted problem domains that can be fully captured by a set of symbols and rules.
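To make the symbolic idea concrete, here is a minimal sketch of a forward-chaining rule system in Python. The rule set and symbol names (`has_feathers`, `is_penguin`, and so on) are hypothetical illustrations, not taken from any real expert system; the point is that every conclusion the program can reach has to be written in by hand as a rule.

```python
# Hypothetical rule base: each rule is (set of premise symbols, conclusion symbol).
RULES = [
    ({"has_feathers"}, "is_bird"),
    ({"is_bird", "cannot_fly", "swims"}, "is_penguin"),
    ({"has_fur", "gives_milk"}, "is_mammal"),
]


def forward_chain(facts, rules):
    """Repeatedly fire any rule whose premises are all known, until nothing new is derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # A rule fires only if every premise symbol is already a known fact.
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


if __name__ == "__main__":
    print(sorted(forward_chain({"has_feathers", "cannot_fly", "swims"}, RULES)))
    # → ['cannot_fly', 'has_feathers', 'is_bird', 'is_penguin', 'swims']
```

A fact that no rule mentions simply produces no new conclusions, which is exactly the restricted-domain limitation of the symbolic approach.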

Modern AI is much more heavily rooted in Connectionism, which takes an entirely different approach: networks of weighted connections are trained on examples, adjusting their weights through what is essentially trial and error. Such methods have found their way into many people’s everyday lives. You may have encountered targeted advertising on websites like Amazon, face recognition on a smartphone camera, or personalised health tracking on a fitness device. These applications and others are driving a large portion of AI research to focus on the architectural design of such networks and how best to train them.
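As a minimal sketch of the connectionist alternative, the Python below trains a single perceptron, the simplest network of weighted connections, on four labelled examples of the logical OR function. Nothing about OR is hard-coded: the model guesses, compares its guess with the correct answer, and nudges its weights in proportion to the error. The learning rate and number of epochs are illustrative choices, not tuned values.

```python
# Training examples for logical OR: (inputs, correct output).
OR_EXAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]


def predict(weights, bias, x):
    """Fire (output 1) if the weighted sum of the inputs plus the bias is positive."""
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else 0


def train_perceptron(examples, epochs=20, lr=0.1):
    """Perceptron learning rule: after each guess, adjust weights by lr * error * input."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in examples:
            error = target - predict(weights, bias, x)  # 0 if correct, +1 or -1 if wrong
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


if __name__ == "__main__":
    w, b = train_perceptron(OR_EXAMPLES)
    print([predict(w, b, x) for x, _ in OR_EXAMPLES])  # → [0, 1, 1, 1]
```

A single perceptron can only learn linearly separable functions such as OR; the deeper networks behind face recognition stack many such units and use more elaborate training, but the principle of learning weights from examples is the same.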

This is where Machine Learning (ML) comes in. Although it has other uses, much of Machine Learning involves training connectionist models. State-of-the-art methods can be extremely complex, but at their core, most ML methods can be broadly grouped into three paradigms of learning, each suited to different kinds of task: supervised, unsupervised, and reinforcement learning.

- Supervised = prediction. Given a dataset of correct input-target pairs, a machine learning model can be trained to make predictions about new, unseen examples, such as a label (classification) or a value (regression).

- Unsupervised = pattern-finding. Rather than predicting a target, the model learns the structure of the inputs themselves: how the training data might have been generated and what patterns relate the inputs. This includes tasks like clustering or dimensionality reduction.

- Reinforcement = goal attainment. This branch of machine learning shares features with both supervised and unsupervised learning: rather than being given input-target pairs, the model receives a reward signal that quantifies its performance and is trained to maximise its long-term reward. This type of learning is generally used in settings that require sequential decision-making, like DeepMind’s AlphaGo agent.

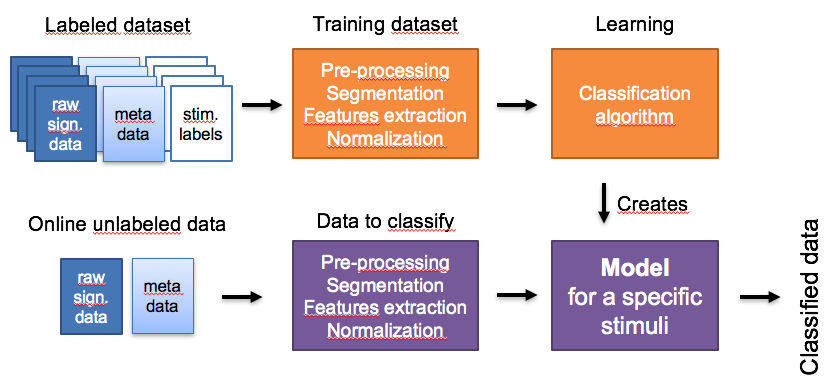
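The three paradigms listed above can be contrasted with deliberately tiny, dependency-free Python sketches. The data, the corridor environment and all parameter values are hypothetical toy choices: least-squares line fitting stands in for supervised regression, one-dimensional k-means for unsupervised clustering, and tabular Q-learning on a five-cell corridor for reinforcement learning. None of this reflects how large systems such as AlphaGo are actually built.

```python
import random


# Supervised: learn y = a*x + b from input-target pairs (ordinary least squares).
def fit_line(xs, ys):
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    a = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    return a, mean_y - a * mean_x


# Unsupervised: group unlabelled 1-D points around k centres (k-means).
def kmeans_1d(points, centroids, iters=10):
    for _ in range(iters):
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # Move each centre to the mean of its cluster (keep it if the cluster is empty).
        centroids = [sum(c) / len(c) if c else centroids[i] for i, c in enumerate(clusters)]
    return centroids


# Reinforcement: an agent in a corridor of cells 0..n-1 starts at cell 0 and only
# receives a reward (1.0) when it reaches the last cell. Tabular Q-learning.
def q_learning_corridor(n_states=5, episodes=200, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(n_states)]  # q[state][action]; 0 = left, 1 = right
    goal = n_states - 1
    for _ in range(episodes):
        s = 0
        while s != goal:
            if rng.random() < epsilon or q[s][0] == q[s][1]:
                a = rng.randrange(2)  # explore, or break ties at random
            else:
                a = 0 if q[s][0] > q[s][1] else 1  # exploit the best-known action
            s_next = max(0, s - 1) if a == 0 else s + 1
            reward = 1.0 if s_next == goal else 0.0
            # Target = immediate reward + discounted value of the best next action.
            target = reward if s_next == goal else reward + gamma * max(q[s_next])
            q[s][a] += alpha * (target - q[s][a])
            s = s_next
    # Greedy policy learned for each non-goal cell.
    return ["right" if q[s][1] > q[s][0] else "left" for s in range(goal)]


if __name__ == "__main__":
    print(fit_line([0, 1, 2, 3], [1, 3, 5, 7]))  # → (2.0, 1.0)
    print(kmeans_1d([1.0, 1.2, 0.8, 9.0, 9.5, 8.5], [0.0, 5.0]))
    print(q_learning_corridor())
```

Only the supervised sketch is given correct answers; k-means has to discover the two groups on its own; and the Q-learning agent is never told which way to go, learning only from the delayed reward at the end of the corridor.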

Here at Vivent, when we analyse plant signals we use a mix of simple and sophisticated statistics and machine learning. In our research we are trying to link specific plant physiology with the electrical signals plants emit, to better understand how plants respond to their environment. Sophisticated statistics can often identify these correlations. However, we also want to be able to identify environmental stimuli, for instance an insect attack or a fertiliser system breakdown, so that growers can intervene quickly to provide the optimum conditions for their crops. For this application, supervised machine learning is much more effective at finding distinctive electrical signals linked to specific conditions that plants experience.
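As a purely illustrative sketch of the supervised approach described above (not Vivent’s actual pipeline, which this article does not describe), the Python below generates hypothetical synthetic voltage windows, summarises each with two simple features (standard deviation and peak-to-peak range), and labels new windows with a nearest-centroid classifier. The labels `"calm"` and `"stimulus"`, the signal shapes, the noise levels and the helper names are all invented for the example.

```python
import math
import random
import statistics


def make_window(label, rng, n=200):
    """Hypothetical synthetic data: 'stimulus' windows contain a slow 1.0-unit deflection."""
    noise = [rng.gauss(0.0, 0.1) for _ in range(n)]
    if label == "stimulus":
        return [v + math.sin(math.pi * i / n) for i, v in enumerate(noise)]
    return noise


def features(window):
    """Summarise a window by its spread and its peak-to-peak range."""
    return (statistics.pstdev(window), max(window) - min(window))


def fit_centroids(X, y):
    """Supervised step: average the feature vectors of each labelled class."""
    centroids = {}
    for label in set(y):
        rows = [x for x, lab in zip(X, y) if lab == label]
        centroids[label] = tuple(sum(col) / len(rows) for col in zip(*rows))
    return centroids


def classify(centroids, x):
    """Assign the label whose class centroid is closest in feature space."""
    return min(centroids, key=lambda lab: math.dist(x, centroids[lab]))


def evaluate(seed=0, n_train=50, n_test=20):
    """Train on labelled synthetic windows, then score on fresh held-out windows."""
    rng = random.Random(seed)
    labels = ["calm", "stimulus"]
    y_train = [lab for lab in labels for _ in range(n_train)]
    X_train = [features(make_window(lab, rng)) for lab in y_train]
    centroids = fit_centroids(X_train, y_train)
    y_test = [lab for lab in labels for _ in range(n_test)]
    correct = sum(classify(centroids, features(make_window(lab, rng))) == lab for lab in y_test)
    return correct / len(y_test)


if __name__ == "__main__":
    print(f"held-out accuracy on synthetic data: {evaluate():.2f}")
```

Real plant recordings are likely to be far noisier and less separable than this toy data, so features and classifiers would have to be chosen and validated on labelled recordings; the accuracy printed here says nothing about real-world performance.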

Like people and animals, plants adapt to their environment, so they do not respond identically to the same stimulus each time. In this case we use unsupervised machine learning, and we are achieving impressive results: we can predict some stimuli with 99% accuracy.

Machines can see things that we can’t, whether through standard statistical calculations on large datasets or through much more complex machine learning algorithms. We are very excited about working with these powerful techniques to enable more sustainable food production.